Part 1: The Big Picture · See the whole mountain first

Chapter 1

Recalibrating Your Expectations

Your mental image of a robot probably looks something like this: it understands speech, opens doors on its own, does backflips on a battlefield, and carries your coffee while making small talk.

Thanks, Hollywood.

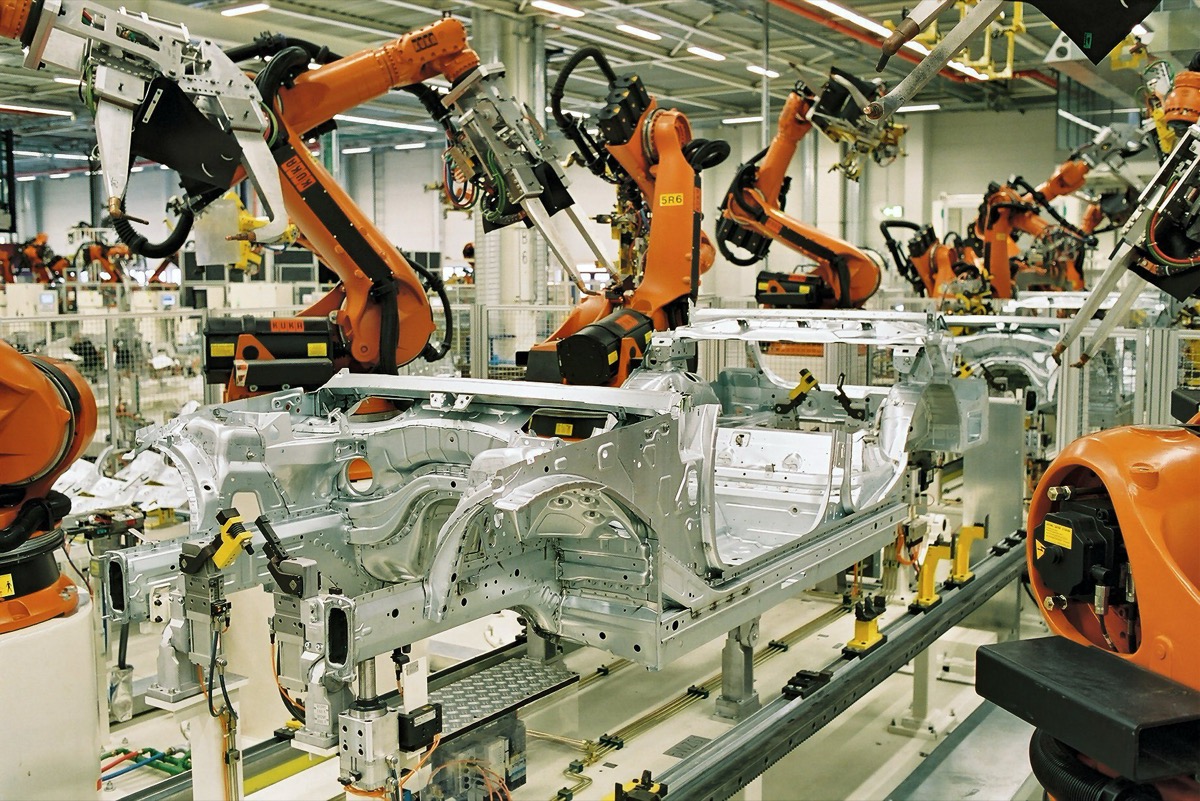

In reality, most robots that actually do useful work look like this — rows of robotic arms in factories, welding, painting, and moving parts around the clock. Impressive at scale, sure, but there's zero intelligence here. Every motion is a pre-programmed trajectory replay. Move it to a different workstation and you have to re-teach the whole thing from scratch.

Or they look like this — a boxy machine shuffling pallets along fixed routes on a warehouse floor. It doesn't chat. It doesn't know you. If a stray cardboard box shows up in its path, it might route around it — or it might just stop and wait for someone to come move the box.

Hollywood showed you the destination but skipped everything in between. Going from "follow a fixed route to move pallets" to "find your own path, dodge obstacles, and hold a conversation" — each step is its own engineering battleground.

This chapter lays those battlegrounds out — what a working robot is actually made of, and what problem each piece solves.

What Actually Makes Up a Working Robot?

Let's start with the most basic question: what's the minimum a robot needs to complete a task in the real world?

Imagine you're building an intern. This intern needs to walk into a warehouse, locate a water bottle, pick it up, and deliver it to the packing station.

It needs four things:

A body. A mobile base that can move, arms that can grab, a frame that can take a hit. This is the hardware layer — motors, gearboxes, joints, wheels, grippers. Without a body, every algorithm is castles in the sky. If you're coming from AI, this is the layer you're most likely to underestimate, because all your previous work happened on GPUs. Physical-world constraints are new territory.

A nervous system. The body parts need to talk to each other. The camera spots the water bottle — that information needs to reach the brain. The brain makes a decision — that command needs to reach the arm. This is what ROS 2 does. It's not really an "operating system" in the traditional sense — think of it more as the communication bus inside the robot. Dozens of modules run simultaneously, exchanging data through topics, services, and actions. If it helps, think of it as a very elaborate pub/sub messaging system.

A cerebellum. Responsible for motor control. The brain says "reach for the third shelf." The cerebellum translates that into "rotate joint 1 by 30 degrees, joint 2 by -15 degrees, joint 3 by 72 degrees..." This involves inverse kinematics (IK), trajectory planning, and force control. It's not "smart," but it has to be fast and precise. Your biological cerebellum doesn't do any thinking either, but it's the reason you can hold a cup of water without spilling it.

A brain. Perception + decision-making. Perception transforms raw sensor data (pixels, point clouds, IMU readings) into meaningful information — "there's a red bottle 2.3 meters ahead, tilted 15 degrees to the left." Decision-making picks the next action based on that information — "navigate to the shelf, then reach and grasp, then navigate to the packing station." This is the layer AI developers know best, but you'll quickly discover that running perception on a robot is nothing like running inference on a server: your latency directly determines whether the robot crashes into a wall.

Each of these four layers has its own tech stack, its own pitfalls, its own community. A "working" robot means all four layers are functioning and in sync. Drop any one, and nothing works.

This is why robotics is harder than pure software: you're not optimizing a single pipeline. You're simultaneously coordinating four systems, each with physical constraints. If any one layer fails, the entire machine stops.

Moravec's Paradox: Why Folding Laundry Is a Million Times Harder Than Writing a Paper

In 1988, AI researcher Hans Moravec noticed something deeply counterintuitive:

It is comparatively easy to make computers exhibit adult-level performance on intelligence tests or playing checkers, and difficult or impossible to give them the skills of a one-year-old when it comes to perception and mobility.

This is Moravec's Paradox.

GPT-4 can pass the bar exam. But as of today, no robot can reliably fold a full basket of laundry.

Because "folding laundry," once you break it down, is absurdly complex:

Perception. Clothing is a deformable object — its shape changes every second. You can't slap a fixed 3D bounding box on it like you would a rigid object. Visual features shift wildly with every wrinkle, lighting change, and color variation. Depth cameras produce extremely noisy data on fabric surfaces.

Manipulation. Just picking up a floppy T-shirt is already hard. You need precise gripper force control (too much and you'll tear it, too little and it slides out), plus you're dealing with complex friction dynamics between the fabric and the gripper. Spreading it flat, folding it in half, folding it again? Each step requires coordinated bimanual fine-force control, and every piece of clothing has different material, size, and initial state.

Planning. The goal "fold it neatly" is inherently vague — how neat is neat enough? Different garments fold differently. You can't hardcode a fixed action sequence because the input conditions are different every time.

Writing a paper, on the other hand? Everything happens in token space. Input: tokens. Output: tokens. Computation happens on GPUs with no physical constraints, no sensor noise, no execution error, no real-time requirements. If it fails, you just regenerate.

This is the single biggest mental model shift for AI developers entering robotics: you used to solve information-processing problems; now you're solving physical-interaction problems. Information processing is forgiving — you can retry. Physical interaction is not. The robot hit the wall. The cup is already on the floor.

This is also why the robotics industry has never lacked jaw-dropping demos but is starved for products that actually hold up in deployment. In a lab, you control the lighting, fix the object positions, use top-tier hardware, run fifty trials, and clip the one success into a video — that's a demo. Getting the same robot to run twelve hours a day across twenty different warehouses, each with different shelf layouts, floor surfaces, lighting conditions, and Wi-Fi signal strength, without failing — that's production. Between the two lies a massive chasm.

So What Can Robots Actually Do in 2026?

Here's a cheat sheet, so you don't have to find out the hard way.

Going Well

Navigation and transport in structured environments. Warehouse AGVs and AMRs are by far the most commercially mature robotics application. Routes are relatively fixed, environments are controlled, tasks are repetitive. Amazon runs hundreds of thousands of Kiva robots in its fulfillment centers. Geek+, HAI Robotics, and others are doing the same in China. This segment has moved past "does it work?" and into "how do we make it cheaper?"

Industrial pick-and-place with fixed poses. Parts on an assembly line sit in known positions, come in limited varieties, and have clean backgrounds. Traditional industrial robots (FANUC, KUKA, ABB) have been doing this for decades. With 2D vision guidance, pick-and-place success rates hit 99.9%.

Inspection in controlled environments. Following preset routes through data centers, power facilities, or pipelines — taking photos, detecting anomalies. Low environmental variability, highly repetitive tasks.

Making Progress, But Not There Yet

Navigation in unstructured environments. Getting a robot to roam freely through a shopping mall it has never seen — dodging strollers, routing around temporary signage, handling reflective marble floors. Nav2 handles basic dynamic obstacle avoidance, but the edge cases are endless and reliability is nowhere near production-ready.

General-purpose grasping. Picking up objects of varying shape, material, and weight. Especially deformable items (bagged food, cables), transparent objects (glass bottles), and reflective objects (metal parts). Foundation model approaches (FoundationPose, GraspVLA, etc.) are pushing the frontier, but there's still a gap between demo and reliable deployment.

Basic locomotion for humanoid robots. Unitree H1/G1, Figure, 1X — these humanoids can walk, run, and perform basic manipulations. RL-trained gait control is improving rapidly. But the gap between "it can walk" and "it can do useful work in a real scenario" isn't about locomotion — it's about the complete loop of perception, decision-making, and reliability.

Still a Long Way Off

True general manipulation. Using both hands to perform complex, dexterous tasks the way humans do — folding laundry, assembling furniture, cooking. Dexterous hand hardware is improving, imitation learning demos keep getting more impressive, but robustness and generalization are still lacking.

Extended autonomous operation in open environments. Operating for hours in a completely unknown environment with no human intervention. This requires perception, navigation, manipulation, error recovery, battery management, and communication — all stable and coordinated. No commercial system achieves this today.

Natural-language-driven general task execution. "Clean up the kitchen." That means the robot needs to understand what "clean" means, identify every object that needs handling, plan the sequence, and deal with every curveball — the dishwasher is full, the trash bags ran out. LLM + VLA research is hot, but this goal remains distant.

What Does This Mean for You?

If you're an AI developer thinking about getting into robotics, here's the takeaway from this chapter:

The opportunity is real. The AI transformation of this industry is just getting started. Many traditional robotics bottlenecks — perception, decision-making, parameter tuning, exception handling — are being redefined by AI methods. The foundation-model thinking, data-driven approach, and rapid iteration mindset that AI developers bring are genuinely scarce in this field.

But respect the complexity. It's not as simple as "deploy a model to a Jetson." Every line of inference code you write is connected to a physical entity with mass, inertia, and the ability to crash into things. Latency isn't a UX issue anymore — it's a safety issue. An edge case doesn't mean returning an error message — it means the robot just smashed a piece of equipment.

Start with a small, closed-loop problem. Don't try to build a "general-purpose humanoid robot" on day one. Find a specific scenario, a specific robot, a specific task, and get the perception → decision → action → feedback loop working end to end. Getting one robot to reliably complete one task in one warehouse is worth a hundred times more than ten flashy demos.

In the chapters ahead, we'll start with the full-stack map and work our way through this mountain, layer by layer.